As automobiles add more intelligent perception hardware, such as high-performance CMOS image sensors and lidar, their level of driving autonomy keeps rising, which in turn reduces traffic casualties and improves road safety. Onsemi, a leader in intelligent power and intelligent sensing technology, provides a full range of intelligent perception solutions, including image sensors, ultrasonic sensors, lidar, and sensor fusion. Its image sensors hold more than 80% of the market and offer high resolution, high dynamic range (HDR), LED flicker mitigation (LFM), safety, and reliability. They are backed by a comprehensive product portfolio (power management, lighting solutions, and motor drivers), system design expertise, reference designs, powerful and flexible development kits, experienced application support, key components compliant with ISO 26262/ASIL standards, and an extensive partner ecosystem. Together these support safe ADAS and L2+ autonomous driving, which is expected to save a total of 81,000 lives each year.

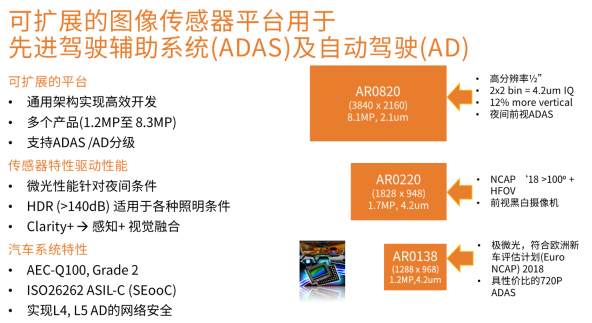

Scalable Image Sensor Platform: Reduces Development Time, Reduces Design Risk, and Meets Evolving Automotive Safety Requirements

ON Semiconductor’s scalable image sensor family spans 1.3 to 8.3 megapixels, with excellent low-light performance and a high dynamic range of >140 dB. Clarity+ fuses perception and vision. The family complies with the AEC-Q100 automotive specification and ISO 26262 ASIL-C and shares a common architecture, so automotive OEMs can reuse the software and algorithms of existing devices and develop efficiently. Within the family, the 4.2 µm AR0138 is an ideal choice for cost-effective 720p ADAS cameras, with ultra-low-light performance in line with the European New Car Assessment Program (Euro NCAP); the 4.2 µm AR0220 offers slightly higher resolution than the AR0138; and the AR0820 offers up to 8.3 megapixels, suitable for night-time forward-looking ADAS and higher levels of autonomous driving.

AR0820 High Resolution DR-Pix

High resolution is important for autonomous driving because it lets the system detect and recognize smaller objects. Self-driving cars need to see farther and to detect objects of all shapes and sizes; detecting objects and other hazards at greater distances improves safety by alerting the driver and triggering action sooner. The AR0820 is an 8.3-megapixel, 2.1 µm DR-Pix wafer-stacked automotive sensor with a perception capability 100 times that of human vision under all conditions. Wafer stacking technology enables a compact camera design.
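The link between resolution and detection distance can be made concrete with a simple pinhole-camera estimate of how many pixels an object covers. The sketch below is illustrative only: the 60° horizontal field of view, the 0.5 m object width, and the horizontal pixel counts (1280 for a 720p sensor, 3840 for an 8.3-megapixel sensor) are assumptions, not figures from any datasheet.

```python
import math

def pixels_on_target(object_width_m, distance_m, h_pixels, hfov_deg):
    """Horizontal pixels covered by an object, using an ideal pinhole model."""
    # Width of the scene seen by the full sensor at this distance.
    scene_width_m = 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)
    return object_width_m / scene_width_m * h_pixels

def max_distance_m(object_width_m, h_pixels, hfov_deg, min_pixels):
    """Farthest distance at which the object still covers `min_pixels`."""
    half_fov_tan = math.tan(math.radians(hfov_deg) / 2.0)
    return object_width_m * h_pixels / (2.0 * half_fov_tan * min_pixels)

if __name__ == "__main__":
    HFOV_DEG = 60.0   # assumed lens field of view
    WIDTH_M = 0.5     # assumed object width (roughly a pedestrian)
    for name, h_pixels in (("720p-class", 1280), ("8.3 MP-class", 3840)):
        px = pixels_on_target(WIDTH_M, 100.0, h_pixels, HFOV_DEG)
        reach = max_distance_m(WIDTH_M, h_pixels, HFOV_DEG, min_pixels=10)
        print(f"{name}: {px:.1f} px at 100 m, 10 px out to {reach:.0f} m")
```

With the same lens, tripling the horizontal pixel count triples the distance at which an object still covers a given number of pixels, which is why forward-looking cameras benefit most from higher resolution.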

High dynamic range (HDR) is a key parameter for camera sensors: it is the sensor's ability to resolve both the darkest and the brightest parts of a scene in the same frame without under- or over-saturation. In other words, the sensor must tolerate changes in ambient light, such as sudden exposure to bright sunlight or reflections, or driving into or out of a tunnel during the day. The AR0820 provides a high dynamic range of >140 dB at distances of up to 185 meters, which suits the harshest lighting conditions and allows safe, reliable use in autonomous driving applications. NIR+ technology enhances near-infrared imaging for best-in-class low-light performance, especially at night.
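To put the decibel figures in perspective, image-sensor dynamic range is conventionally expressed as 20·log10 of the ratio between the brightest and darkest resolvable signals. The short sketch below converts between the two forms; the function names are illustrative.

```python
import math

def db_to_ratio(dynamic_range_db):
    """Convert a dynamic range in dB to a brightest-to-darkest signal ratio."""
    return 10.0 ** (dynamic_range_db / 20.0)

def ratio_to_db(ratio):
    """Convert a brightest-to-darkest signal ratio to dB."""
    return 20.0 * math.log10(ratio)

if __name__ == "__main__":
    for db in (95, 120, 140):
        print(f"{db} dB corresponds to a ratio of about {db_to_ratio(db):,.0f} : 1")
```

On this scale, 140 dB means the brightest resolvable signal is ten million times stronger than the darkest one in the same frame.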

As the number of connected vehicles grows, adding cybersecurity at the sensor level will also become important. The AR0820 integrates a hardware security module, secure boot, video authentication, and control encryption to ensure reliable and secure operation.

It is worth mentioning that AutoX, the leader of L4 unmanned taxis (RoboTaxi) in China, has adopted 28 AR0820s in its fifth-generation RoboTaxi to achieve high-resolution camera fusion with other sensors.

Hayabusa Series: Super Exposure Technology Solving Challenges in the World of Automotive Imaging

Automotive imaging faces several challenges: real-world scenes often reach 120-140 dB of dynamic range; operating temperatures span extremes from -40°C to +105°C; pedestrians and cyclists must be detected on night streets to comply with the European New Car Assessment Program (Euro NCAP); and traffic lights and LED signs must be imaged reliably.

Hayabusa addresses these challenges of extreme temperature, high dynamic range, LED flicker mitigation, functional safety, and NCAP evaluation standards. It delivers high dynamic range (HDR) while suppressing LED flicker, uses back-side illuminated (BSI) technology, and complies with AEC-Q100 Grade 2 and ISO 26262 ASIL-B, making it suitable for ADAS, surround view (SVS), rear-view camera (RVC), car mirror, autonomous driving, and other market segments.

Among them, the AR0233AT is a 2.6-megapixel, 1/2.5-inch BSI HDR automotive sensor. It uses 3.0 µm super-exposure BSI pixels (an HDR pixel technology previously used in the high-end film industry) and offers excellent low-light performance and flexible, programmable exposure control. Dynamic range is >95 dB for a single exposure and >140 dB for multiple exposures, and the sensor outputs 1080p images at 60 fps in 120 dB HDR+LFM mode. On-chip HDR reduces system cost. In addition, the sensor has a low-noise, low-power architecture, leading functional safety, and an output interface of up to 24-bit RAW.

Hayabusa Super Exposure Pixel Concept

Car headlights, taillights, and traffic signs usually use power-saving pulsed LED lighting. In bright scenes, the sensor's short exposure time can miss light pulses entirely, which makes the image flicker. ON Semiconductor develops and owns the intellectual property (IP) for both super-exposure pixels and large-and-small pixel architectures. Having tested actual products across automotive application environments and exposure-time ranges, the company believes super-exposure pixels are the best choice for vision and ADAS functions. The testing identified the balance point that ensures excellent super-exposure image and video quality (noise, color, sharpness, and detail) together with the required LED flicker suppression. Super-exposure pixels also scale more easily to smaller sizes and avoid the serious pixel crosstalk of large-and-small pixel structures, which lowers manufacturing cost for camera designs by reducing lens cost and production accuracy requirements.
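Why a short exposure loses pulses can be seen from the timing alone. The sketch below checks whether an exposure window can fall entirely inside the off-time of a pulsed LED, and gives the shortest exposure that always spans one full pulse period. The 90 Hz frequency and 10% duty cycle are assumed example values, and the function names are illustrative.

```python
def can_miss_pulse(exposure_s, led_freq_hz, duty_cycle):
    """True if an exposure window can fit entirely inside the LED off-time."""
    period_s = 1.0 / led_freq_hz
    off_time_s = (1.0 - duty_cycle) * period_s
    return exposure_s < off_time_s

def min_flicker_free_exposure_s(led_freq_hz):
    """Shortest exposure that always covers one complete LED pulse period."""
    return 1.0 / led_freq_hz

if __name__ == "__main__":
    FREQ_HZ = 90.0   # assumed LED pulse frequency
    DUTY = 0.10      # assumed LED duty cycle
    for exposure_s in (100e-6, 1e-3, 1.0 / FREQ_HZ):
        status = "can miss a pulse" if can_miss_pulse(exposure_s, FREQ_HZ, DUTY) else "always sees light"
        print(f"exposure {exposure_s * 1e3:.2f} ms: {status}")
```

A bright daytime scene pushes a conventional pixel toward the short exposures at the top of this list, whereas a pixel that can integrate for a full pulse period without saturating stays in the flicker-free regime.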

Hayabusa’s super-exposure pixels have 5 times the electron capacity of ordinary pixels, so a long exposure can capture pulsed light without oversaturation. The design is optimized for both human vision and machine vision. It supports >95 dB dynamic range while avoiding flicker and motion artifacts (full-well capacity >100 ke-) at up to 60 fps, and adding a very short T2 exposure (at a ratio of roughly 1/64 to 1/256) extends the dynamic range beyond 120 dB while minimizing HDR+LFM motion artifacts.
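These figures can be related with a simplified model, offered here as a rough sketch rather than the sensor's actual behavior. It assumes that single-exposure dynamic range is 20·log10(full-well capacity / noise floor) and that a second exposure shorter by a given ratio extends the range by 20·log10 of that ratio, ignoring noise in the transition region.

```python
import math

def implied_noise_floor_e(full_well_e, dynamic_range_db):
    """Noise floor (in electrons) implied by a full-well capacity and a DR figure."""
    return full_well_e / 10.0 ** (dynamic_range_db / 20.0)

def extended_dr_db(single_exposure_dr_db, exposure_ratio):
    """Idealized dynamic range after adding an exposure `exposure_ratio` times shorter."""
    return single_exposure_dr_db + 20.0 * math.log10(exposure_ratio)

if __name__ == "__main__":
    FULL_WELL_E = 100_000    # ">100 ke-" from the text, taken at its lower bound
    SINGLE_DR_DB = 95.0      # ">95 dB" from the text, taken at its lower bound
    noise = implied_noise_floor_e(FULL_WELL_E, SINGLE_DR_DB)
    print(f"implied noise floor: {noise:.2f} e-")
    for ratio in (64, 256):
        print(f"T2 ratio 1/{ratio}: about {extended_dr_db(SINGLE_DR_DB, ratio):.1f} dB")
```

Under these assumptions, both ends of the quoted T2 ratio range land above 120 dB, consistent with the dynamic range stated for HDR+LFM operation.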

Performance Evaluation: AR0233 VS Competitive Devices

· SNR in the multi-frame merge transition region: The noise in the transition region of image fusion, that is, the bottom of the V-shaped dip where the signal-to-noise ratio (SNR) drops, is an important factor when evaluating HDR performance. This SNR minimum is sensitive to temperature and decreases further as temperature rises. The AR0233 shows higher dynamic range, whereas competing devices are limited by a fixed optical PD ratio. Thanks to its higher sensitivity, the AR0233 has higher SNR in low light, where competitors show more noise, and it maintains SNR > 30 dB in bright HDR scenes. Competing products are limited by small-pixel noise: their SNR drops to 20 dB, the drop grows as temperature rises, and their dynamic range shrinks, resulting in poor performance.

· HDR+LFM: Dynamic range is the range from the noise floor in low light (SNR = 1) to the signal saturation point of the HDR frame, expressed in decibels. Flicker is the apparent switching on and off of a pulsed light source in the image; keeping the minimum exposure time longer than the light source's pulse period suppresses it. The ability to suppress LED flicker can be measured by feeding the image sensor LED pulses of different frequencies and measuring the modulation of its output. In testing, the competing device showed less dynamic range (traffic lights and headlights saturated), while neither sensor's images showed on/off flicker (speed limit signs and taillights).

· Motion artifacts: Objects in moving scenes smear to different degrees, and the captures that make up an HDR multi-frame image are taken at different times, which ultimately produces motion “ghosting” in the image. Unlike the previous generation of 3-exposure HDR, Super Exposure+T2 produces minimal motion artifacts and natural blur, with an exposure time as short as 1 microsecond. Large-and-small pixel schemes do not exhibit this subtle ghosting, but they produce the artifacts described earlier.
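The flicker measurement described in the HDR+LFM item above can be approximated in simulation. The sketch below models the LED as an ideal rectangular pulse train, sweeps the exposure start across one pulse period, and reports the modulation (max − min) / (max + min) of the captured light. The pulse frequencies, duty cycle, and exposure times are assumed example values, and the model ignores sensor noise and saturation.

```python
def cumulative_on_time(t_s, period_s, on_s):
    """LED on-time accumulated in [0, t) when each period starts with `on_s` of light."""
    full_periods, remainder = divmod(t_s, period_s)
    return full_periods * on_s + min(remainder, on_s)

def flicker_modulation(exposure_s, led_freq_hz, duty_cycle, steps=1000):
    """Modulation of captured light as the exposure start sweeps one LED period."""
    period_s = 1.0 / led_freq_hz
    on_s = duty_cycle * period_s
    captured = []
    for i in range(steps):
        start_s = period_s * i / steps
        light = (cumulative_on_time(start_s + exposure_s, period_s, on_s)
                 - cumulative_on_time(start_s, period_s, on_s))
        captured.append(light)
    lo, hi = min(captured), max(captured)
    return 0.0 if hi == 0.0 else (hi - lo) / (hi + lo)

if __name__ == "__main__":
    DUTY = 0.10  # assumed LED duty cycle
    for freq_hz in (90.0, 200.0, 500.0, 1000.0):
        short = flicker_modulation(100e-6, freq_hz, DUTY)
        long_ = flicker_modulation(1.0 / 90.0, freq_hz, DUTY)
        print(f"{freq_hz:6.0f} Hz: modulation {short:.2f} at 0.1 ms, {long_:.2f} at 11.1 ms")
```

A modulation of 1 means some frames capture no light from the LED at all, while a value near 0 means every frame captures about the same amount, which is the flicker-free case.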

Focus on real-time functional safety

Image sensors are the eyes of the vehicle, so functional safety detection is critical to how the system evaluates and responds to faults. Real-time safety mechanisms let the system evaluate every frame, which enables safer designs. ON Semiconductor’s automotive image sensors put real-time functional safety first. Over more than ten years, ON Semiconductor has collected more than 8,000 failure images and built them into a fault image library. Based on this database, the algorithm platform can perform fault modeling and simulation, enabling algorithm verification and system behavior analysis.

Summary

Intelligent perception solutions are at the heart of advanced safety and autonomy functions, and as vehicles evolve, improved perception performance is opening the way to higher levels of safety and autonomous driving. As the largest supplier of automotive image sensors, ON Semiconductor provides sensors with industry-leading high dynamic range, LED flicker mitigation (LFM), and low-light sensitivity that meet automotive regulations and focus on functional safety. Its latest devices, such as the AR0820 and AR0233, address automotive imaging challenges and, together with perception solutions such as ultrasonic sensing, lidar, and sensor fusion, make roads safer. A highly scalable imaging platform, combined with power management, lighting solutions, motor drivers, reference designs, and development kits, makes developing new products more flexible and efficient.